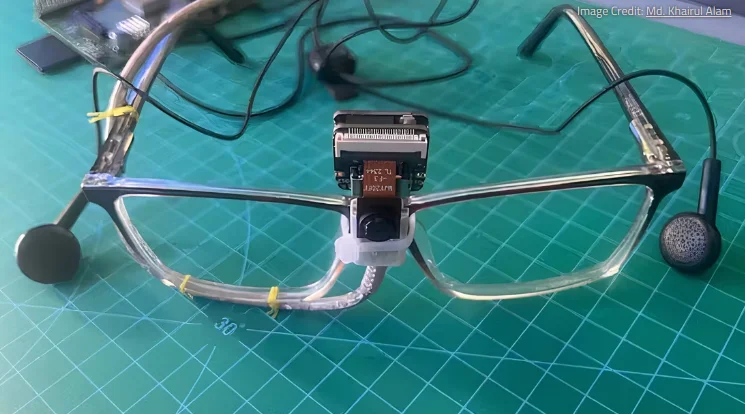

The idea behind this “third eye” project is that the wearer puts on glasses fitted with a small camera. The camera feeds images to a Seeed Studio XIAO ESP32S3 Sense and a Raspberry Pi 1 Model B+, which use object recognition to work out what’s in front of the person. The boards then generate a text description of the scene and relay it to the wearer as speech, using text-to-speech, through headphones.
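To make the middle step of that pipeline concrete, here is a minimal Python sketch of how a list of recognised objects might be turned into a sentence for the text-to-speech stage. It is not the project's code: the detection format (`label`, `confidence`, `x_center`), the function names `side_of` and `describe_scene`, and the 0.5 confidence cut-off are all my own assumptions for illustration.

```python
from collections import Counter

# Hypothetical detection format: (label, confidence, x_center), where
# x_center is the horizontal centre of the bounding box as a 0..1
# fraction of the frame width. The real detector's output may differ.


def side_of(x_center):
    """Map a horizontal position to a coarse spoken direction."""
    if x_center < 1 / 3:
        return "on your left"
    if x_center > 2 / 3:
        return "on your right"
    return "ahead"


def describe_scene(detections, min_confidence=0.5):
    """Turn raw detections into one short sentence suitable for TTS."""
    # Count objects per (label, direction), skipping low-confidence hits.
    counts = Counter(
        (label, side_of(x_center))
        for label, confidence, x_center in detections
        if confidence >= min_confidence
    )
    if not counts:
        return "Nothing recognised."

    parts = []
    for (label, side), n in counts.items():
        # Naive pluralisation; irregular nouns would need a lookup table.
        noun = label if n == 1 else f"{label}s"
        parts.append(f"{n} {noun} {side}")
    return ", ".join(parts) + "."


if __name__ == "__main__":
    frame = [
        ("chair", 0.9, 0.2),
        ("chair", 0.7, 0.1),
        ("door", 0.8, 0.5),
        ("dog", 0.3, 0.9),  # dropped: below the confidence cut-off
    ]
    print(describe_scene(frame))
```

The resulting string would then be handed to whatever text-to-speech engine the build uses. Grouping by direction keeps the spoken output short, which probably matters more for a listener than for a reader.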

It would be interesting to hear how useful the spoken descriptions actually are to the listener. I’d also imagine that a system like this can be trained further and improved over time.

See https://www.xda-developers.com/raspberry-pi-ai-visually-impaired/